This is the last installment of a three-part imgix blog series covering streaming video: its value, how adaptive bitrate streaming makes it work, and the HLS/LL-HLS protocol. imgix is releasing a streaming video optimization and hosting solution — if you foresee a need for this, contact us at support@imgix.com.

Regardless of how tasty the steak, it’s the sizzle that sells. That’s why you make sure the images on your website are optimized for fast page loads and high resolution. The perfect photo won’t impress anyone, let alone generate clicks, if viewers have to wait too long to see it or if it isn’t sharp. And as you add more video content to your website, you have to pay equal attention to optimizing that content as well.

Your audience demands an excellent user experience: uninterrupted video streams at the best possible resolution on any kind of device. In our last blog, we wrote about the importance of adaptive bitrate streaming (ABS) as a key element of optimizing the user experience when watching videos. If you haven’t read it, please do; it will make this blog easier to follow.

Here, we explain a bit about HLS and its adaptive bitrate capability. For many reasons, it’s the most common method that developers use for getting top-quality video playback on everything from mobile devices to 4K TVs.

What is HLS?

Apple came out with the HLS (HTTP Live Streaming) protocol, a method for transmitting video content between web servers and client devices, in 2009 after it decided to move away from Flash. The new protocol would be the default for iOS, macOS, and Apple TV. It was entirely compatible with HTML5, and although its name includes “live” streaming, it works just as well for video on demand. Given these characteristics, it’s not surprising it slowly but surely became the global standard in video streaming protocols.

How does HLS work?

All web servers use HTTP, and HLS is built on top of it. HLS works by breaking a video into short chunks (segments), usually MPEG-2 Transport Stream or fragmented MP4 files, that an index file called a playlist lists in playback order. The player doesn’t need a special streaming connection. It downloads the playlist and then requests each short chunk with an ordinary HTTP request, one after another, until all the chunks are received and the stream is complete.

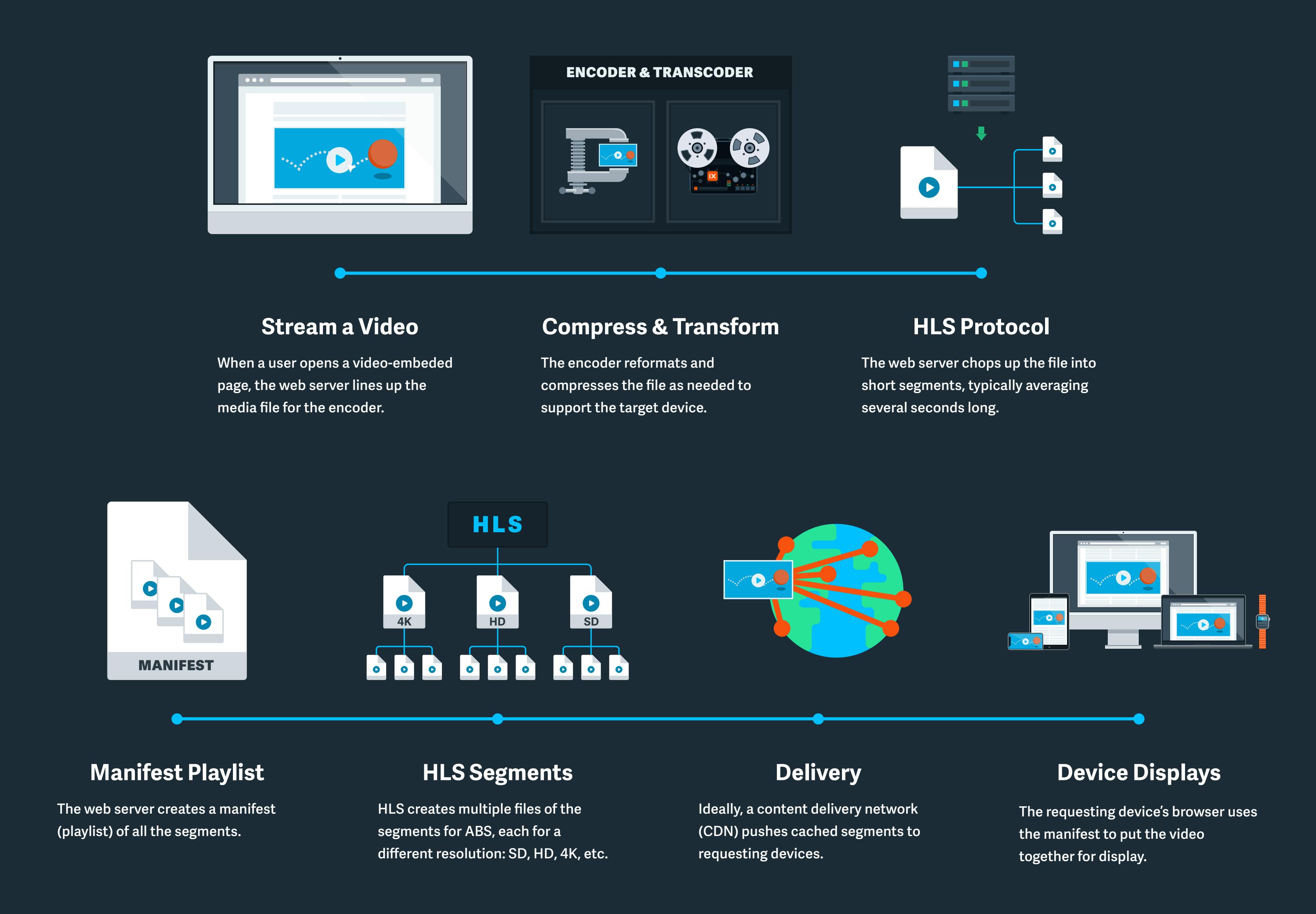
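To make the chunk-and-index idea concrete, here is a minimal Python sketch of how a player might read a playlist and work out which chunks to request over HTTP. The playlist text, the `example.com` URL, and the `segment_requests` helper are illustrative assumptions, not imgix’s implementation; the tags (`#EXTM3U`, `#EXTINF`, `#EXT-X-ENDLIST`) are standard HLS playlist tags.

```python
from urllib.parse import urljoin

# Hypothetical media playlist for a single rendition (assumed for illustration).
MEDIA_PLAYLIST = """#EXTM3U
#EXT-X-VERSION:3
#EXT-X-TARGETDURATION:6
#EXT-X-MEDIA-SEQUENCE:0
#EXTINF:6.0,
segment0.ts
#EXTINF:6.0,
segment1.ts
#EXTINF:4.5,
segment2.ts
#EXT-X-ENDLIST
"""


def segment_requests(playlist_text, playlist_url):
    """Return (segment_url, duration_seconds) pairs in playback order."""
    requests = []
    duration = None
    for raw in playlist_text.splitlines():
        line = raw.strip()
        if line.startswith("#EXTINF:"):
            # Format is "#EXTINF:<duration>,<optional title>"
            duration = float(line[len("#EXTINF:"):].split(",")[0])
        elif line and not line.startswith("#"):
            # A non-tag line is a segment URI, resolved relative to the playlist URL.
            requests.append((urljoin(playlist_url, line), duration))
            duration = None
    return requests


# Assumed playlist location; a real player would first fetch this URL.
requests = segment_requests(
    MEDIA_PLAYLIST, "https://example.com/video/720p/index.m3u8"
)
for url, seconds in requests:
    print(f"GET {url}  ({seconds}s)")
```

Each line the sketch prints corresponds to one plain HTTP GET, which is why HLS can be served by off-the-shelf web servers and cached by CDNs.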

Here’s a step-by-step of what’s involved:

- After someone clicks a link to watch a video, the web server responds by lining up the media file for the encoder/transcoder. (Encoding compresses the video; transcoding converts it from one format to another.)

- The encoder reformats and compresses the file as needed to support the target device.

- Using the HLS protocol, the web server chops up the file into short segments, typically a few seconds long each.

- The web server creates a manifest (playlist) of all the segments.

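- For ABS, HLS calls for several versions (renditions) of the segments, each at a different resolution and bitrate: SD, HD, 4K, etc. A top-level manifest lists the renditions so the player can switch between them.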

- Ideally, a content delivery network (CDN) serves cached segments to requesting devices. The CDN typically uses a worldwide network of servers so that each viewer downloads from a geographically close source, which means faster downloads.

- The requesting device’s browser uses the manifest to put the video together for display.
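The manifest and ABS steps above can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not how imgix or any particular player does it: the three-rung rendition ladder, its bitrates, the playlist paths, and the `safety` factor are all made up for the example. The `#EXT-X-STREAM-INF` tag with its `BANDWIDTH` and `RESOLUTION` attributes is the standard way a top-level HLS manifest describes each rendition.

```python
from dataclasses import dataclass


@dataclass
class Rendition:
    name: str
    width: int
    height: int
    bandwidth: int  # peak bits per second needed to play this rendition
    uri: str        # path to this rendition's own segment playlist


# Hypothetical rendition ladder (all values assumed for illustration).
LADDER = [
    Rendition("SD", 854, 480, 1_400_000, "480p/index.m3u8"),
    Rendition("HD", 1920, 1080, 5_000_000, "1080p/index.m3u8"),
    Rendition("4K", 3840, 2160, 16_000_000, "2160p/index.m3u8"),
]


def master_playlist(ladder):
    """Build the top-level manifest that lists every rendition."""
    lines = ["#EXTM3U"]
    for r in ladder:
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={r.bandwidth},"
            f"RESOLUTION={r.width}x{r.height}"
        )
        lines.append(r.uri)
    return "\n".join(lines) + "\n"


def pick_rendition(ladder, measured_bps, safety=0.8):
    """Pick the best rendition that fits within a fraction of measured throughput.

    The safety factor is an assumed heuristic that leaves headroom for
    network fluctuations; real players use more elaborate logic.
    """
    budget = measured_bps * safety
    affordable = [r for r in ladder if r.bandwidth <= budget]
    if not affordable:
        # Nothing fits: fall back to the lowest-bitrate rendition.
        return min(ladder, key=lambda r: r.bandwidth)
    return max(affordable, key=lambda r: r.bandwidth)


print(master_playlist(LADDER))
print(pick_rendition(LADDER, measured_bps=8_000_000).name)
```

A player would repeat the `pick_rendition` step as its throughput measurements change, switching renditions at segment boundaries. That switching is what keeps playback smooth when the network speeds up or slows down.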

Below is an example of a video being converted using the imgix Video API and streamed using our `<ix-video>` video player web component:

You can read our documentation to learn how to create the web-friendly player above.

Why HLS sets the standard for high-quality streaming video

By itself, ABS makes the difference between an unpredictable, sometimes completely unacceptable user experience and smooth, highest-possible-quality video sessions. HLS takes care of ABS and delivers a lot more as well.

- Most modern operating systems support HLS, meaning it works with virtually every smartphone, tablet, laptop, desktop, TV, and connected device on the market.

- Its HTML5 compatibility means it’s easy for developers to integrate it into new apps and features.

- Because it’s the de facto standard, site visitors don’t need to invest in different devices and apps to watch content, and providers can use off-the-shelf web servers. It is, by far, the most cost-effective streaming protocol available.

The low-latency solution

When HLS first rolled out, it was an exceptional protocol for video on demand, where latency wasn’t critical. Other protocols served live streams such as sports and interactive events with near-real-time delivery. However, in 2019 at its Worldwide Developers Conference (WWDC), Apple made two stunning announcements: it had developed LL-HLS, a fast, low-latency HTTP live streaming capability, and it had added LL-HLS to the standard HLS protocol.

The existing low-latency protocols were fast. However, they didn’t provide the high quality that HLS, with its ABS, could offer. HLS was originally designed for quality rather than speed; the other protocols were designed for speed rather than quality. HLS added speed to offer the best possible customer experience regardless of the network environment, operating system, or hardware.

The future of streaming videos

It might be a cliché, but 5G is truly going to change everything. Where there’s coverage, 4K videos will download in a snap with speeds more than 10x faster than existing 4G. And 5G is what brings out the potential of edge computing by bringing the most powerful processing capabilities closer to the end user.

What this means to content providers like you is that you’re going to have to start thinking about how you’re going to load up with 3D, augmented, and virtual reality experiences. Customers will want to virtually pick up your product, turn it upside down, see how it looks in their living room, and so on. Of course, the hype is thick now and the reality, in practical terms, is years away. You’ve got some time to get ready.

Thank you for reading, and in case you missed them, check out the previous blogs in this series:

- Streaming video — why it’s essential and what it takes to make it effective

- Adaptive bitrate streaming — what you need to know

And again, if you’re thinking about how imgix can deliver a top streaming video service, contact us at support@imgix.com.